
In the previous blog, we used the J, M, and V letter datasets for a classification task and wrote the classification code by hand. Now let us see how to do the same in the Classification Learner app. In the code, we used only the KNN method and made no attempt to improve accuracy. In the app, we can train models from many classifier families: decision trees, discriminant analysis, support vector machines, logistic regression, nearest neighbors, naive Bayes, and ensemble classification. In addition to training models, we can explore the data, select features, specify validation schemes, and evaluate results. We can then export a model to the workspace to use it with new data, or generate MATLAB code to learn about programmatic classification.

We will follow these steps to perform classification in the Classification Learner app:

1) Before opening the app, we import the data into the workspace. I have used the same “J”, “M” and “V” feature data as in the previous blog on classification. The features.xlsx file that contains the data has been imported into the workspace.

2) Next, click Classification Learner in the Apps tab in MATLAB. It is available if you have installed the Statistics and Machine Learning Toolbox. We can also open the app by entering classificationLearner in the Command Window.

3) This is how the app looks after opening. To start a session, click the New Session icon.

4) A new window named New Session will open. Since the data is already in the workspace, it appears in the list of available variables, and we select it.

Once the features table is selected as the dataset, the app automatically identifies the response and the predictors. The response is the variable whose classes we want to predict. Here, Character is selected as the response, and the app shows that it has three unique values: “J”, “M” and “V”. Predictors are the features used to assign each sample to a class. The other two columns, Aspect Ratio and Duration, automatically become the predictors.
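The same split into response and predictors can be checked from the command line. This is only a small sketch: the table below is a made-up stand-in for features.xlsx, the variable names AspectRatio, Duration, and Character are assumed from the column names described above, and the numeric values are invented for illustration.

```matlab
% Hypothetical stand-in for the imported table (values are invented)
AspectRatio = [0.45; 0.50; 1.20; 1.10; 0.80; 0.85];
Duration    = [0.30; 0.35; 0.90; 0.95; 0.50; 0.55];
Character   = {'J'; 'J'; 'M'; 'M'; 'V'; 'V'};
features = table(AspectRatio, Duration, Character);

% Response: the column that holds the class labels
responseName = 'Character';
classes = unique(features.(responseName))

% Predictors: every remaining column, in their original order
predictorNames = setdiff(features.Properties.VariableNames, ...
                         {responseName}, 'stable')
```

Running this on the real table is a quick way to confirm that the response column contains only the three expected letters before starting a session.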

Before choosing a validation option, let us see what validation is. In validation, the available training data is split into a training part and a validation part. The validation part is used to check the trained model before testing, so that we can detect overfitting. There are two types of validation:

Cross-Validation: The number of folds is the number of parts into which the training data is randomly divided. Each part is used once for validation while the remaining parts are used for training, and the results are averaged over all folds.

Holdout Validation: We select the percentage of data to hold out for validation, and the remaining data is used for training. This is usually recommended for large datasets.
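To make the two schemes concrete, here is a rough sketch using cvpartition from the Statistics and Machine Learning Toolbox. The label vector is hypothetical (20 samples per letter), and the fold count and holdout fraction are arbitrary choices for illustration, not values taken from the app session.

```matlab
% Hypothetical labels: 20 samples each of J, M, and V
labels = repmat({'J'; 'M'; 'V'}, 20, 1);

rng(1);  % fix the random seed so the split is repeatable

% 5-fold cross-validation: every sample is used for validation exactly once
cvp = cvpartition(labels, 'KFold', 5);
for k = 1:cvp.NumTestSets
    fprintf('Fold %d: %d training, %d validation samples\n', ...
            k, sum(training(cvp, k)), sum(test(cvp, k)));
end

% Holdout validation: set aside 25% of the samples a single time
hp = cvpartition(labels, 'HoldOut', 0.25);
fprintf('Holdout: %d training, %d validation samples\n', ...
        hp.TrainSize, hp.TestSize);
```

With cross-validation, all of the data eventually contributes to both training and validation, which is why it is usually preferred over holdout for small datasets.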

Since the dataset that I am using is small, I am not using validation. If I used validation, the remaining training data would not be sufficient for learning. Note that without validation, the accuracy reported by the app is measured on the training data itself, so it tends to be optimistic.

5) This is how the app looks after selecting the dataset and validation method. When the session opens, a scatter plot of the training data features appears. The variables on the X and Y axes of the scatter plot can be changed on the right side.

6) Since we don’t know which model suits the dataset, we will select all model types here. After this, we click the Train option to train all available models on the training data.

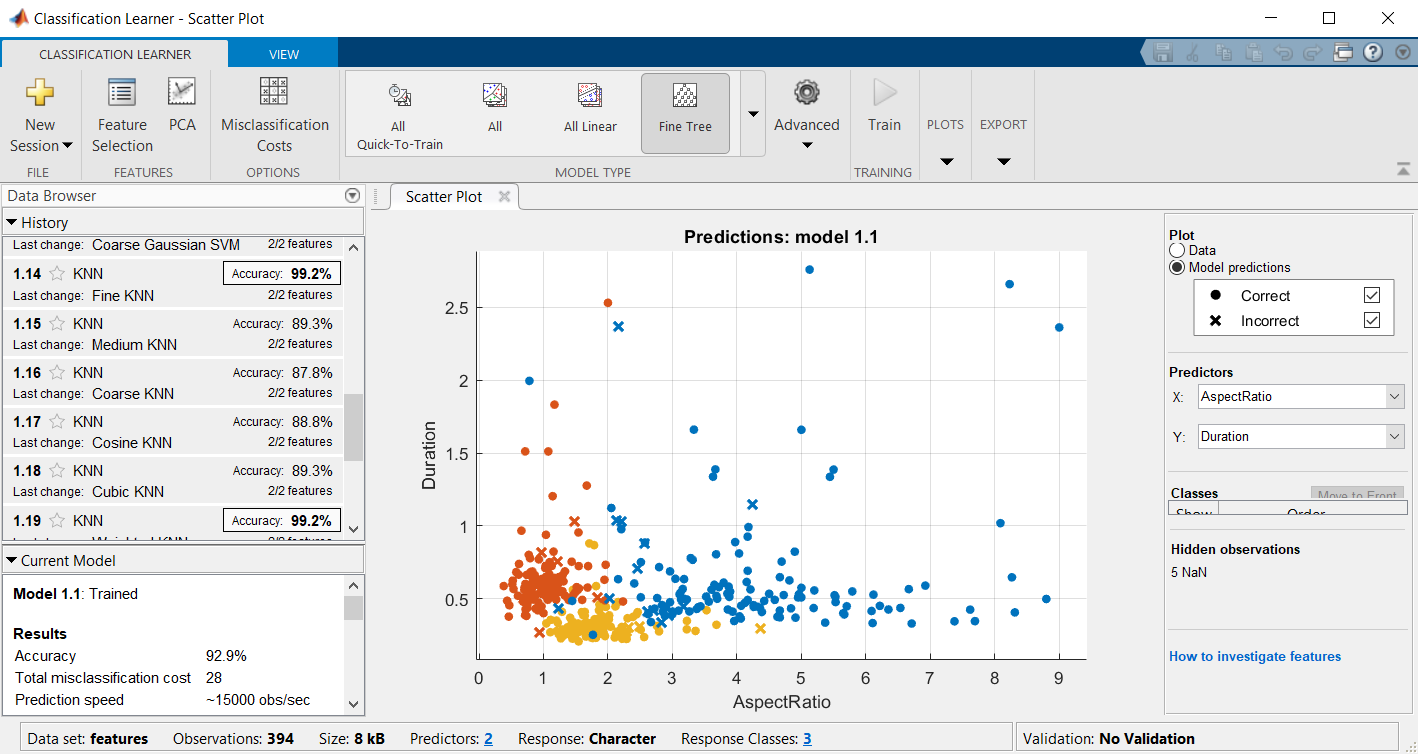

7) The training accuracy of the 24 models appears on the left side. The scatter plot in the center shows the predictions of the currently selected model.
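For readers who want to see what training accuracy means outside the app, here is a rough programmatic sketch with fitcknn. The data is synthetic (three invented clusters standing in for the letters), and the two sets of options are my assumption of settings close to the app's Fine KNN and Weighted KNN presets; they are not code generated by the app.

```matlab
% Hypothetical training data: three well-separated clusters, 20 samples each
rng(0);
centers = [0.5 0.3; 1.2 0.9; 0.8 0.5];
X = repelem(centers, 20, 1) + 0.05 * randn(60, 2);
Y = [repmat({'J'}, 20, 1); repmat({'M'}, 20, 1); repmat({'V'}, 20, 1)];
tbl = table(X(:,1), X(:,2), Y, ...
            'VariableNames', {'AspectRatio', 'Duration', 'Character'});

% Assumed approximations of two app presets (not exported from the app)
fineKNN = fitcknn(tbl, 'Character', 'NumNeighbors', 1, 'Standardize', true);
weightedKNN = fitcknn(tbl, 'Character', 'NumNeighbors', 10, ...
                      'DistanceWeight', 'squaredinverse', 'Standardize', true);

% Without validation, accuracy is measured on the training data itself
fineAcc = 100 * (1 - resubLoss(fineKNN));
weightedAcc = 100 * (1 - resubLoss(weightedKNN));
fprintf('Fine KNN: %.1f%%, Weighted KNN: %.1f%%\n', fineAcc, weightedAcc);
```

A one-neighbor model always finds each training sample as its own nearest neighbor, so its training accuracy says little about how it will behave on new data. That is why the test-set check later in this post matters.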

8) There is an icon called plots that has the following options.

9) We can now select the models with the highest accuracy. Here, Fine KNN and Weighted KNN have the highest accuracy, 99.2%. Using the Export option, I exported them to the workspace as trainedModel and trainedModel1. Export has the following options:

10) We can test these two models on the testing data and check the accuracy to find the best model.

After exporting, the command window will look like this:

The trainedModel.predictFcn(readtable("features.xlsx")) command returns the predictions of the exported model. The following code computes the accuracy of the model on the test data.

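testdata = readtable("testdata.xlsx");

% convert predicted and true labels to string arrays so they compare element by element

predictions = string(trainedModel.predictFcn(testdata));

actual = string(testdata.Character);

% accuracy: percentage of test samples predicted correctly

iscorrect = predictions == actual;

accuracy = sum(iscorrect)*100/numel(iscorrect)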
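A single accuracy number hides which letters get confused with each other. As a sketch of one way to look deeper, confusionmat tabulates true against predicted labels. The label vectors below are hypothetical (a 20-sample test set with two invented mistakes), not the actual predictions from this post.

```matlab
% Hypothetical true and predicted labels for a 20-sample test set
actual = [repmat({'J'}, 7, 1); repmat({'M'}, 7, 1); repmat({'V'}, 6, 1)];
predicted = actual;
predicted{3}  = 'V';   % pretend one J was classified as V
predicted{10} = 'J';   % pretend one M was classified as J

% Rows are true classes, columns are predicted classes
[cm, order] = confusionmat(actual, predicted)

% Overall accuracy and per-class recall computed from the matrix
accuracy = 100 * sum(diag(cm)) / sum(cm(:))
recall = diag(cm) ./ sum(cm, 2)
```

In recent MATLAB releases, confusionchart(actual, predicted) draws the same matrix as a chart, which is a convenient way to visualize the test results.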

Similarly, we repeat this for the other exported model. We get testing accuracies of 90% and 85% for the Fine KNN and Weighted KNN models, respectively. Hence, we choose Fine KNN, as it is the best model for this dataset. When we coded the KNN model directly, we got an accuracy of 80%. Using the app, we identified the best model quickly and reached a better accuracy of 90%.

